Overview

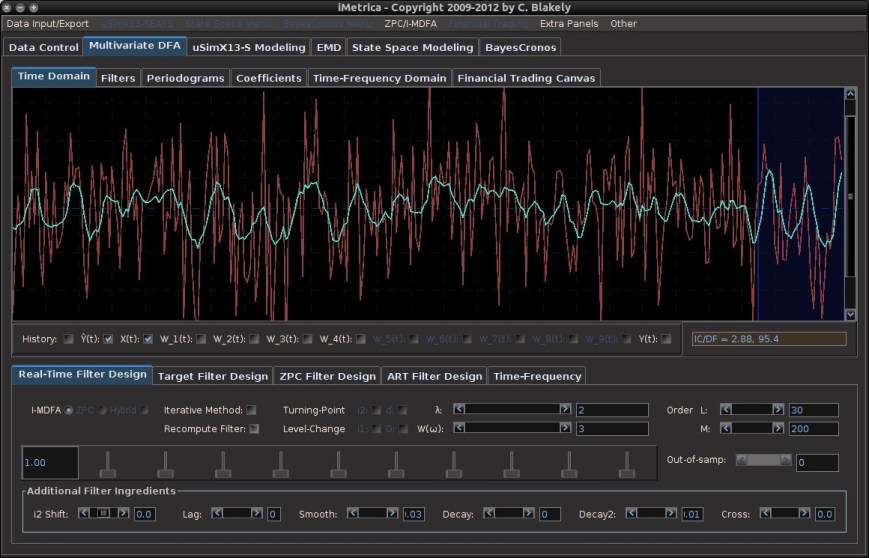

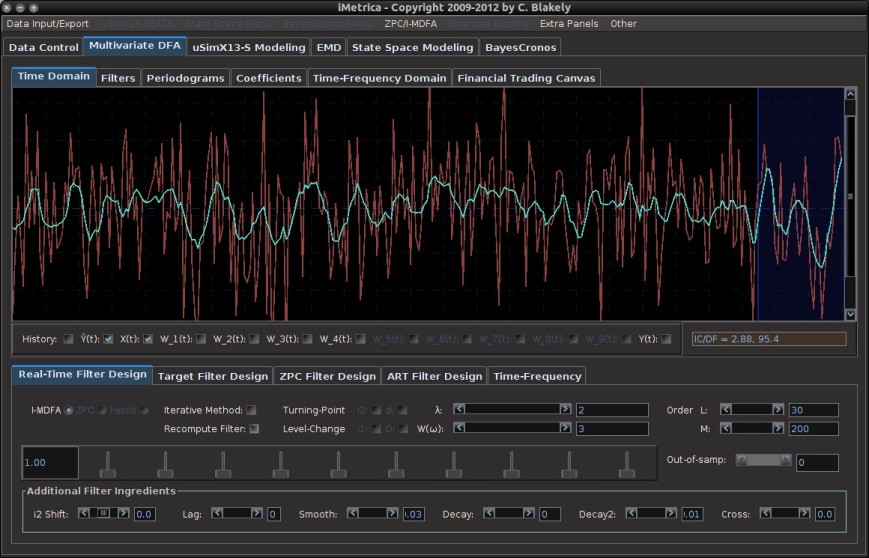

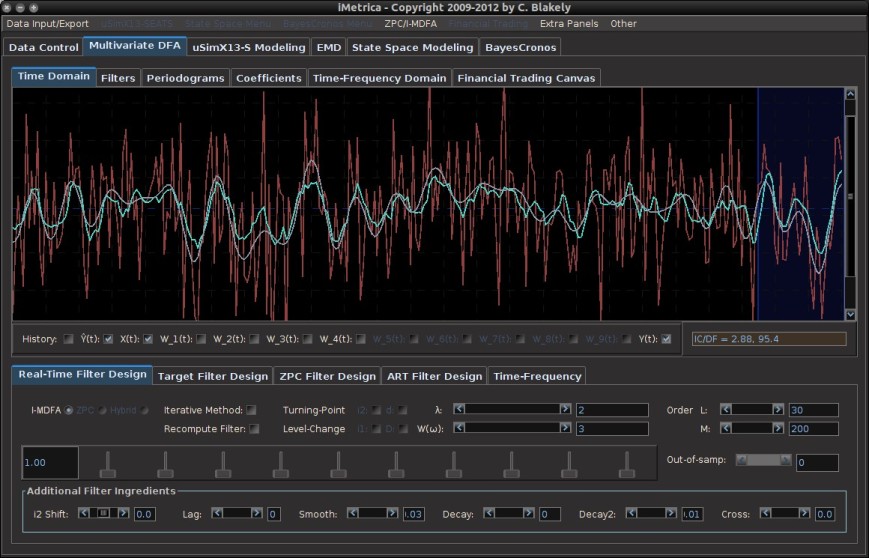

MDFA-DeepLearning is a library for building machine learning applications on large collections of multivariate time series, with a heavy emphasis on noisy, (non)stationary data. Its goal is to learn underlying patterns, signals, and regimes in multivariate time series and to detect, predict, or forecast them in real time, with the aid of both a real-time feature extraction system based on the multivariate direct filter approach (MDFA) and deep recurrent neural networks (RNNs). The feature extraction system uses the MDFA-Toolkit to construct K multivariate signals in real time (the features), where each of the K features targets a certain frequency range in the underlying time series. Furthermore, each (or some) of these features can also be forecast multiple steps ahead, or smoothed, creating many possibilities for signal or regime learning in time series.

For the deep learning components, this package focuses on two network structures: a recurrent weighted average network (RWA; Ostmeyer and Cowell) and a standard long short-term memory (LSTM) network. The RWA cell is a type of RNN cell that computes a recurrent weighted average over every past processing timestep, unlike standard RNN cells, which only use the state passed on from the immediately preceding timestep.
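To make the "weighted average over every past timestep" concrete, here is a minimal sketch of the running-average recursion at the core of an RWA cell, reduced to a single scalar unit. It assumes the candidate values z_t and the attention scores a_t are already given; in the cell described by Ostmeyer and Cowell, both are computed from the input and the previous hidden state through learned weights, and an activation is applied to the ratio. The class and method names are illustrative and are not part of MDFA-DeepLearning.

```java
/** Illustrative only: scalar recurrent weighted average, not the library's RWA cell. */
final class RwaSketch {

    /**
     * Returns h[t] = (sum_{i<=t} z[i] * exp(a[i])) / (sum_{i<=t} exp(a[i])).
     * Only a running numerator and denominator are carried between steps,
     * yet every past step contributes to h[t].
     */
    static double[] runningWeightedAverage(double[] z, double[] a) {
        if (z.length != a.length) {
            throw new IllegalArgumentException("z and a must have the same length");
        }
        double[] h = new double[z.length];
        double numerator = 0.0;
        double denominator = 0.0;
        for (int t = 0; t < z.length; t++) {
            double weight = Math.exp(a[t]);   // unnormalized attention weight
            numerator += z[t] * weight;
            denominator += weight;
            h[t] = numerator / denominator;   // activation omitted in this sketch
        }
        return h;
    }

    public static void main(String[] args) {
        // Equal scores reduce the recursion to a plain running mean.
        double[] h = runningWeightedAverage(
                new double[]{1.0, 3.0, 5.0}, new double[]{0.0, 0.0, 0.0});
        System.out.println(java.util.Arrays.toString(h)); // prints [1.0, 2.0, 3.0]
    }
}
```

A practical implementation would also rescale the exponentials to avoid overflow on long sequences; that detail is left out here.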

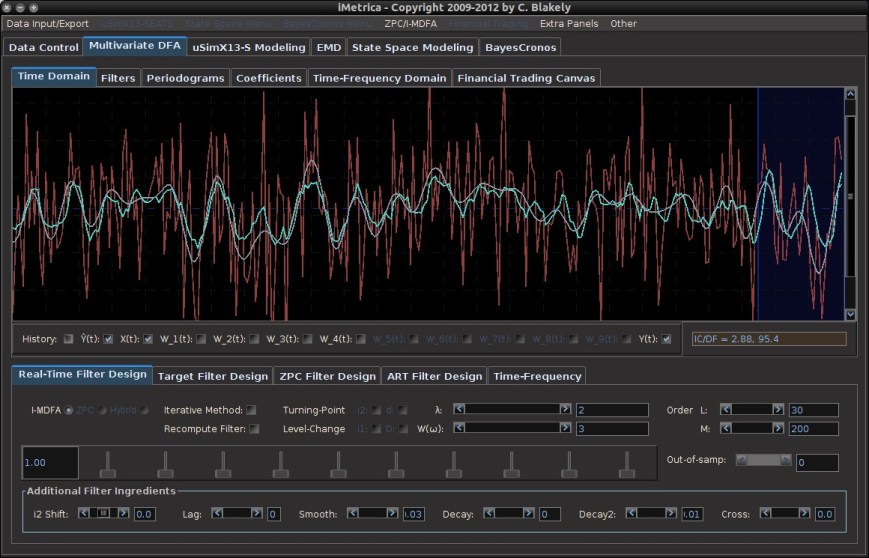

The general architecture of the proposed network is given in Figure 1 for the case of an RWA network, which we discuss in more detail below. Given a sequence of N multivariate time series values that have been transformed appropriately to a stationary sequence, denoted Y_1, Y_2, …, Y_N, a real-time feature extraction process is applied at each observation. The extracted features are then used as input to an RWA (or LSTM) network, whose univariate output is a targeted signal value (regression) or a regime value (classification).

Figure 1: Proposed network design using RWA cells to learn from the real-time feature extractor. The Y_t values are the (multivariate) transformed time series values, and the S_t are univariate outputs describing a target signal or regime.
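What "transformed appropriately" means depends on the data. The example later in this article works with a fractionally differenced series, so the following is a minimal sketch of that transform, assuming the standard binomial expansion of (1 - B)^d truncated to a fixed number of weights. The names `FracDiffSketch`, `weights`, and `apply` are hypothetical and do not come from the MDFA-Toolkit, which may compute the transform differently.

```java
/** Illustrative only: truncated fractional differencing of a univariate series. */
final class FracDiffSketch {

    /** First nWeights coefficients of (1 - B)^d: w[0] = 1, w[k] = -w[k-1] * (d - k + 1) / k. */
    static double[] weights(double d, int nWeights) {
        if (nWeights < 1) {
            throw new IllegalArgumentException("nWeights must be at least 1");
        }
        double[] w = new double[nWeights];
        w[0] = 1.0;
        for (int k = 1; k < nWeights; k++) {
            w[k] = -w[k - 1] * (d - k + 1) / k;
        }
        return w;
    }

    /** Causal application: y[t] = sum_k w[k] * x[t - k]; NaN until enough history exists. */
    static double[] apply(double[] x, double[] w) {
        double[] y = new double[x.length];
        for (int t = 0; t < x.length; t++) {
            if (t < w.length - 1) {
                y[t] = Double.NaN;            // not enough past observations yet
                continue;
            }
            double sum = 0.0;
            for (int k = 0; k < w.length; k++) {
                sum += w[k] * x[t - k];
            }
            y[t] = sum;
        }
        return y;
    }
}
```

With d = 1 the weights reduce to (1, -1, 0, …), i.e. ordinary first differences; values of d between 0 and 1 remove less of the series' memory.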

Why Use MDFA-DeepLearning

One might want to develop predictive models in multivariate time series data using MDFA-DeepLearning if the time series exhibit any of the following properties:

- High-Dimensionality (many (un)correlated nonstationary time series)

- Difficult to forecast using traditional model-based methods (VARIMA/GARCH) or traditional deep learning methods (RNN/LSTM, component decomposition, etc.)

- Emphasis needed on out-of-sample real-time signal extraction and forecasting

- Regime changing in the underlying dynamics (or data generating process) of the time series is a common occurrence

The MDFA-DeepLearning approach differs from most machine learning methods in time series analysis in its emphasis on real-time feature extraction, where the feature extractors are built using the multivariate direct filter approach. The motivation behind coupling MDFA with machine learning is that, while many time series decomposition methodologies exist (from empirical mode decomposition to stochastic component analysis methods), all of them rely on in-sample decompositions of historical data (useless for future data) and/or on assumptions about the boundary values. Neither is attractive when fast, real-time, out-of-sample predictions are the emphasis. Furthermore, simply applying standard recurrent neural networks for step-ahead forecasting or signal extraction directly on the original noisy data is a useless exercise: the recurrent networks will typically only learn noise, producing signals and forecasts of little to no value (in most cases, the latter).

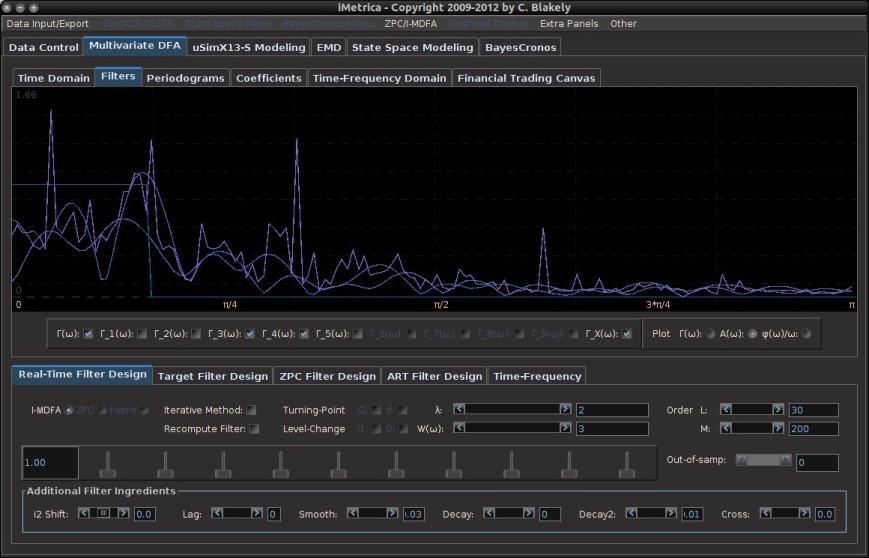

As mentioned, the back-end used for the novel feature extraction is the multivariate direct filter approach (MDFA), which extracts both local (higher-frequency) and global (low-frequency) features in real time, out-of-sample, and outputs these features as a multivariate time series that serves as input to an RWA or LSTM recurrent neural network. The package is thus divided into four components, all of which need to be defined properly in order to produce predictive models for time series data:

- Labeling interface

- Feature extractors

- DataSetIterator interface

- Learning interface

Labeling interface

The package includes an interface for labeling time series. The labeling process takes segments of historical data, and labels each time series observation in some manner. There are three types of labels that can be used:

- Observational labeling: every time series observation is labeled by a signal value (for example a target value computed by a symmetric target filter). This is sequence-to-sequence labeling for time series regression.

- Fixed Period labeling: every period (day, week, etc.) is labeled, typically by a one-hot vector. This is sequence-to-value labeling: only the end of the period is labeled, and the remaining values are not (they are masked by nonvalues in the code).

- Regime labeling: every value in a specific regime is labeled by a class vector, typically one-hot (for example, long (1,0), short (0,1), and neutral (0,0); or trend (1,0) and mean-reverting (0,1)). This is another example of sequence-to-sequence labeling, but with class vectors, now in the form of sequence classification.

Other labeling strategies can certainly be used, but these are the three most common. We will give an outline on how to create a custom labeling strategy in a future article.
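The three labeling types can be sketched in plain Java as follows. This is a hypothetical illustration, not the package's labeling interface: the class `LabelingSketch`, its method names, the use of a symmetric moving average as the target filter, the up/down rule for period labels, the threshold rule for regimes, and `NaN` as the "nonvalue" mask are all assumptions made for the example.

```java
/** Illustrative only: three ways of labeling a univariate series. */
final class LabelingSketch {

    /** Observational labeling: symmetric moving-average target, one label per observation. */
    static double[] observationalLabels(double[] series, int halfWidth) {
        double[] labels = new double[series.length];
        for (int t = 0; t < series.length; t++) {
            if (t - halfWidth < 0 || t + halfWidth >= series.length) {
                labels[t] = Double.NaN;       // symmetric filter needs past AND future values
                continue;
            }
            double sum = 0.0;
            for (int j = -halfWidth; j <= halfWidth; j++) {
                sum += series[t + j];
            }
            labels[t] = sum / (2 * halfWidth + 1);
        }
        return labels;
    }

    /** Fixed-period labeling: only the last observation of each period is labeled. */
    static double[][] fixedPeriodLabels(double[] series, int periodLength) {
        double[][] labels = new double[series.length][];
        for (int t = 0; t < series.length; t++) {
            boolean periodEnd = (t + 1) % periodLength == 0;
            if (!periodEnd) {
                labels[t] = new double[]{Double.NaN, Double.NaN};   // masked
                continue;
            }
            double change = series[t] - series[t - periodLength + 1];
            // (1,0): period closed higher than it opened; (0,1): otherwise
            labels[t] = change > 0 ? new double[]{1.0, 0.0} : new double[]{0.0, 1.0};
        }
        return labels;
    }

    /** Regime labeling: long (1,0), short (0,1), or neutral (0,0) at every observation. */
    static double[][] regimeLabels(double[] signal, double threshold) {
        double[][] labels = new double[signal.length][];
        for (int t = 0; t < signal.length; t++) {
            if (signal[t] > threshold) {
                labels[t] = new double[]{1.0, 0.0};
            } else if (signal[t] < -threshold) {
                labels[t] = new double[]{0.0, 1.0};
            } else {
                labels[t] = new double[]{0.0, 0.0};
            }
        }
        return labels;
    }
}
```

Note that the observational labels are undefined at the end of the sample, because the symmetric target needs future values. This is why such targets can only serve as training labels on historical data, while the real-time features must be computed from past observations alone.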

Feature Extractors

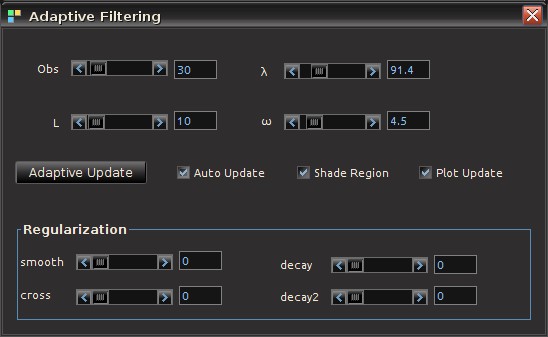

The package contains a feature extraction class called MDFAFeatureExtraction which, when instantiated, is used as the input to a DataSetIterator. MDFAFeatureExtraction contains a default automated feature extraction builder, which takes a value of K as the number of features and a lag indicating how many steps to smooth or forecast ahead.

One application of the MDFA feature extraction tool is to decompose a multivariate time series in real time into K components that are close to being “orthogonal”, meaning here that the frequency content of the components is relatively disjoint. A precise mathematical formulation of this property, and examples of MDFAFeatureExtraction, will follow. Another application, used for turning-point detection in trends, is to decompose the multivariate series into K low-frequency components with different speeds and forecasting/smoothing characteristics.
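Structurally, real-time extraction amounts to applying K one-sided filters to the observations available so far. The sketch below shows only that mechanic for a univariate series; it is not the MDFA-Toolkit API. The class `FilterBankSketch` and the coefficient arrays are hypothetical, and in MDFA the coefficients would come from a frequency-domain optimization over a multivariate series, which is not shown.

```java
/** Illustrative only: K causal (one-sided) filters producing K features per observation. */
final class FilterBankSketch {

    /**
     * features[t][k] = sum_j coeffs[k][j] * series[t - j].
     * Only observations up to time t are used, so each row can be computed in real time.
     */
    static double[][] extract(double[] series, double[][] coeffs) {
        int numFeatures = coeffs.length;
        double[][] features = new double[series.length][numFeatures];
        for (int t = 0; t < series.length; t++) {
            for (int k = 0; k < numFeatures; k++) {
                double[] b = coeffs[k];
                if (t < b.length - 1) {
                    features[t][k] = Double.NaN;   // filter not yet fully primed
                    continue;
                }
                double sum = 0.0;
                for (int j = 0; j < b.length; j++) {
                    sum += b[j] * series[t - j];
                }
                features[t][k] = sum;
            }
        }
        return features;
    }
}
```

For instance, with the placeholder coefficients `{1.0, 0.0}` (pass-through) and `{0.5, 0.5}` (two-point average), each observation yields one unsmoothed and one smoothed feature.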

DataSetIterator

The DataSetIterator is an interface from ND4J, a library for fast N-d array manipulation akin to NumPy in Python. More specifically, a DataSetIterator handles traversing a dataset and preparing data for a recurrent neural network. The datasets in this package are produced by passing the TimeSeries through the MDFAFeatureExtraction objects; the resulting outputs then become the input to the RNN. The DataSetIterator also performs the labeling and determines how the output is arranged. It is thus essentially the communication layer between the underlying time series, the extraction process, and the inputs and outputs of the RNN. In the package, we have designed two examples of DataSetIterators, one for regression and one for classification, which will be described in more detail in a later article.
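To illustrate the kind of data preparation involved, the sketch below cuts a matrix of extracted features into fixed-length sequences laid out as [example][feature][timeStep], which matches the default [miniBatchSize, featureCount, timeSeriesLength] layout expected by DL4J recurrent layers. It uses plain Java arrays rather than ND4J, and the class name and the choice of non-overlapping windows are assumptions; the package's own iterators may window the data differently.

```java
/** Illustrative only: arranging a [time][feature] matrix into RNN-style sequences. */
final class SequenceWindowSketch {

    /**
     * @param features       matrix of shape [T][K]: K feature values at each of T observations
     * @param timeStepLength number of observations per sequence
     * @return array of shape [T / timeStepLength][K][timeStepLength]; leftover rows are dropped
     */
    static double[][][] toSequences(double[][] features, int timeStepLength) {
        if (features.length == 0) {
            return new double[0][0][0];
        }
        int numFeatures = features[0].length;
        int numWindows = features.length / timeStepLength;
        double[][][] out = new double[numWindows][numFeatures][timeStepLength];
        for (int w = 0; w < numWindows; w++) {
            for (int s = 0; s < timeStepLength; s++) {
                for (int k = 0; k < numFeatures; k++) {
                    out[w][k][s] = features[w * timeStepLength + s][k];
                }
            }
        }
        return out;
    }
}
```

Labels would be arranged the same way, with a mask marking which time steps actually carry a label (all of them for sequence-to-sequence labeling, only the last one for fixed-period labeling).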

Learning interface

Finally, the learning interface is where the final network is defined: all the parameters of the network, the activation and loss functions, and the number and type of layers (LSTM, FeedForward, etc.). The underlying computational framework for this component is DeepLearning4J.

Requirements and Example

MDFA-DeepLearning requires both the MDFA-Toolkit package for constructing the time series feature extractors and the Eclipse Deeplearning4j (dl4j) library for the deep recurrent neural network constructors. The dl4j library is freely available at github.com/deeplearning4j, and is included in the build of this package, which uses Gradle as its dependency management tool.

The back-end for the dl4j package depends on your computational hardware: it can run natively on CPUs, or take advantage of GPUs using CUDA libraries (CUDA 8.0 was used to test the current version of MDFA-DeepLearning). In this package I have included a reference to both versions (assuming a standard linux64 architecture).

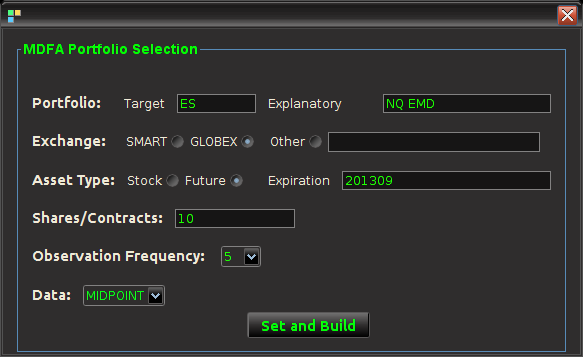

The back-end used for the novel feature extraction technique, as mentioned, is the MDFA-Toolkit (available here), which runs on the ND4J package. The feature extraction begins by defining K MDFA objects, called MDFABase in the MDFA-Toolkit, with the fixed parameters set for each MDFA object. For example, here we define K=4 MDFABase objects that will be used to extract different types of trends at different speeds in a fractionally differenced time series. Please refer to the MDFA-Toolkit documentation for more information on the definition of each MDFA parameter.

```java
MDFABase[] anyMDFAs = new MDFABase[4];

anyMDFAs[0] = (new MDFABase()).setLowpassCutoff(Math.PI/8.0)
                              .setI1(1)
                              .setSmooth(.2)
                              .setLag(-3)
                              .setLambda(2.0)
                              .setAlpha(2.0)
                              .setFilterLength(5);

anyMDFAs[1] = (new MDFABase()).setLowpassCutoff(Math.PI/10.0)
                              .setLag(-2)
                              .setAlpha(2.0)
                              .setFilterLength(5);

anyMDFAs[2] = (new MDFABase()).setLowpassCutoff(Math.PI/4.0)
                              .setDecayStart(.1)
                              .setDecayStrength(.2)
                              .setLag(-1)
                              .setFilterLength(5);

anyMDFAs[3] = (new MDFABase()).setLowpassCutoff(Math.PI/14.0)
                              .setSmooth(.2)
                              .setDecayStart(.1)
                              .setDecayStrength(.1)
                              .setFilterLength(5);
```
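To get a feel for what the four cutoffs mean, it helps to convert them to periods. The sketch below assumes the cutoffs are angular frequencies in radians per observation on [0, π], the usual convention for direct filter design, so that a lowpass cutoff ω passes cycles longer than 2π/ω observations. The helper class is hypothetical and not part of the MDFA-Toolkit.

```java
/** Illustrative only: converting a lowpass cutoff frequency into a period in observations. */
final class CutoffSketch {

    /** A cutoff of omega radians per observation corresponds to a period of 2*pi/omega observations. */
    static double cutoffToPeriod(double cutoff) {
        if (cutoff <= 0.0 || cutoff > Math.PI) {
            throw new IllegalArgumentException("cutoff must lie in (0, pi]");
        }
        return 2.0 * Math.PI / cutoff;
    }

    public static void main(String[] args) {
        // The four cutoffs used for anyMDFAs[0..3] in the example above.
        double[] cutoffs = {Math.PI / 8.0, Math.PI / 10.0, Math.PI / 4.0, Math.PI / 14.0};
        for (double cutoff : cutoffs) {
            System.out.println("cutoff " + cutoff + " -> period " + cutoffToPeriod(cutoff));
        }
    }
}
```

Under that assumption the four extractors target cycles longer than roughly 16, 20, 8, and 28 observations respectively, which is what "different types of trends at different speeds" amounts to on daily data.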

More concrete, in-depth, step-by-step examples and tutorials will be given in the source code on GitHub and in this blog; here we just give a brief overview of an example main program using these features.

```java
/* Define the .csv data file(s) from which the train/test DataSetIterators are built */
String[] dataFiles = new String[]{"AAPL.daily.csv"};

/* Information about the .csv time series file */
TimeSeriesFile fileInfo = new TimeSeriesFile("yyyy-MM-dd", "Index", "Open");

/* Define network parameters */
int miniBatchSize = 100;
int totalTrainExamples = 1500;
int totalTestExamples = 300;
int timeStepLength = 60;
int nHiddenLayers = 2;
int nHidden = 216;
int nEpochs = 400;
int seed = 123;
int iterations = 40;
double learningRate = .001;
double gradientNormThreshold = 10.0;
IUpdater updater = new Nesterovs(learningRate, .4);

/* Instantiate the feature extractors from the array of MDFABase objects */
MDFAFeatureExtraction features = new MDFAFeatureExtraction(anyMDFAs);

/* Instantiate a new RecurrentMdfaRegression network using the features defined above */
RecurrentMdfaRegression myNet = new RecurrentMdfaRegression(features);

/* Set the data and the DataSetIterator parameters */
myNet.setTrainingTestData(dataFiles, fileInfo, miniBatchSize, totalTrainExamples,
        totalTestExamples, timeStepLength);

/* Usually a good idea to normalize the data */
myNet.normalizeData();

/* Build the LSTM (default network) layers */
myNet.buildNetworkLayers(nHiddenLayers, nHidden,
        RecurrentMdfaRegression.setNeuralNetConfiguration(seed, iterations, learningRate,
                gradientNormThreshold, 0, updater));

/* An optional dl4j control panel in the browser */
myNet.setupUserInterface();

/* Train for the given number of epochs */
myNet.train(nEpochs);

/* Print/plot results and stats */
myNet.printPredicitions();
myNet.plotBatches(10);
```

The main points here are that essentially three components need to be defined:

- The .csv time series data file from which the DataSetIterator will extract the time series data for both labeling and learning. Two data sets are created from this: a train set and a test set. Referencing multiple files from which to extract training and test sets is also possible. In dl4j, training and test data are supplied through implementations of the DataSetIterator interface (org.nd4j.linalg.dataset.api.iterator). In the package, we have defined an MDFADataSetIterator and an MDFARegressionDataSetIterator. More DataSetIterators for various applications will be added on an ongoing basis.

- The network RecurrentMdfaRegression is instantiated, and needs to contain the feature signal extractors. Any set of feature extractors can be added; here we used the ones defined above as an example.

- Finally, the LSTM (or recurrent weighted average) network parameters need to be defined. These are then used to construct the layers of the recurrent network.

With these three steps defined, the network should be ready to train and test. The challenge, of course, is defining the feature extraction parameters. In later articles, we will give tips and tricks on what works best for which type of learning application in large time series.
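The example above calls `myNet.normalizeData()` without showing what normalization involves. A common choice, and only an assumption about what the package does internally, is to standardize every value using the mean and standard deviation of the training set alone, so that no information from the test set leaks into training. A minimal plain-Java sketch with hypothetical names:

```java
/** Illustrative only: standardizing data with statistics taken from the training set. */
final class NormalizeSketch {

    /** Returns {mean, standard deviation} of the training values (population form). */
    static double[] meanAndStd(double[] train) {
        double mean = 0.0;
        for (double v : train) {
            mean += v;
        }
        mean /= train.length;
        double variance = 0.0;
        for (double v : train) {
            variance += (v - mean) * (v - mean);
        }
        return new double[]{mean, Math.sqrt(variance / train.length)};
    }

    /** Applies (value - mean) / std; use the TRAINING mean and std for test data too. */
    static double[] standardize(double[] values, double mean, double std) {
        double[] out = new double[values.length];
        for (int i = 0; i < values.length; i++) {
            out[i] = (values[i] - mean) / std;
        }
        return out;
    }
}
```

The same training statistics would then be reused at prediction time, which keeps the real-time, out-of-sample setting honest.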